Hello! I’m cw-ozaki from the SRE Group.

This article continues from Part 2. Taking the migration from EC2 to Kubernetes as a given, I will discuss how Chatwork’s Kubernetes cluster is structured and what our update strategy is.

These topics don’t come up very often. I hope this can be an opportunity to hear a little more about how other companies are doing it.

- Chatwork’s Kubernetes Cluster Structures and Update Strategy

- Kubernetes Cluster Update Interval

- Single-tenant vs. multi-tenant

- In-place vs. Blue/Green Deploy

- Summary

Chatwork’s Kubernetes Cluster Structures and Update Strategy

As of November 2020, Chatwork builds its Kubernetes cluster on EKS.

The cluster uses a multi-tenant, single-cluster structure, and we have three environments: test, staging, and production.

The applications on this cluster and the teams that manage them are as follows.

- Applications for cluster management: SRE Team

- Applications made with Scala (six applications): Scala Team

- Applications made with PHP (six applications): SRE Team (scheduled to be assigned to the PHP Team)

We plan to replace the Kubernetes cluster itself every three months: we start up a new cluster using a Blue/Green deployment strategy and move each application to it individually.

In other words, we quickly stand up a new cluster, quickly move the applications over, and if there is an issue, we put them back!!! That’s our style.

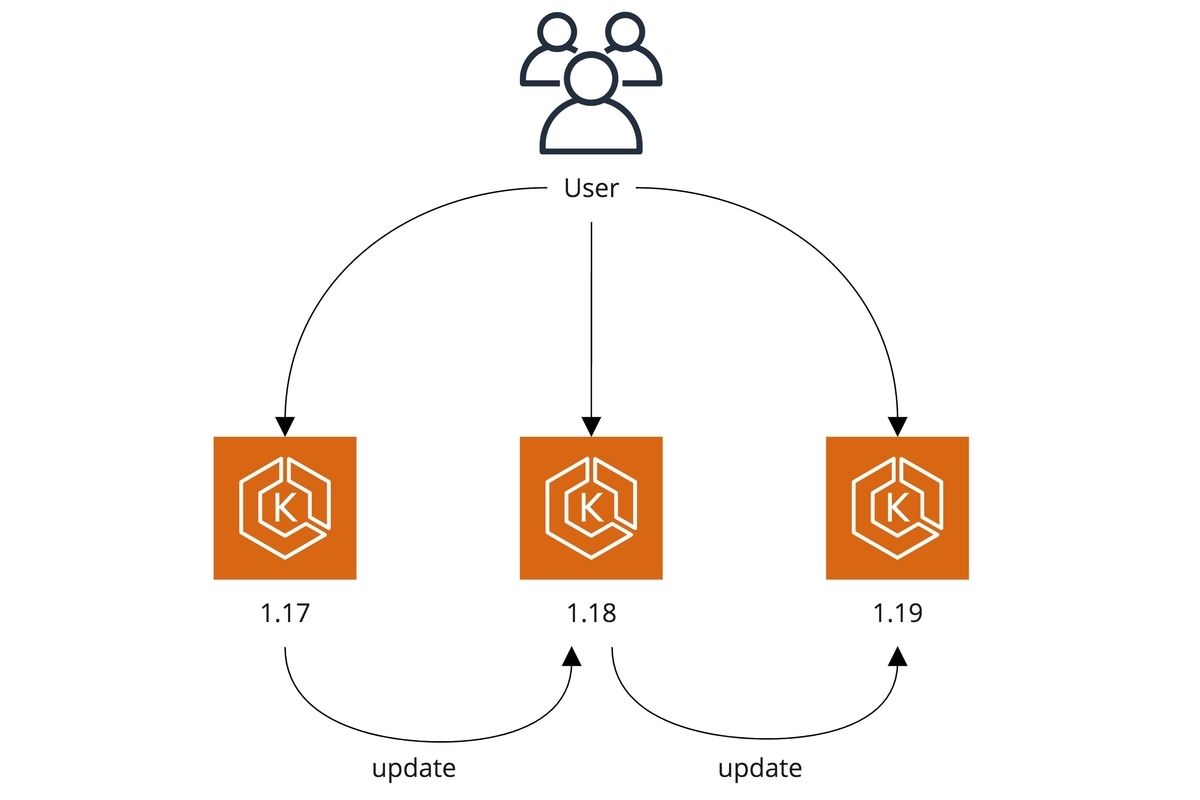

Kubernetes Cluster Update Interval

With EKS, a new version is released about every three months and reaches end of support after about a year.

This cycle is almost the same as that of Kubernetes itself, so you need to follow it even if you are not using EKS.

Therefore, when adopting Kubernetes, you have to choose between keeping up with the latest version every three months and updating at least once before a year passes.

This is partly a matter of personal preference, but Chatwork updates its clusters every three months for the following reasons.

- We can adopt new features early.

- When an update spans several versions, there are too many changelogs to track.

- When updating just before EOL, the schedule depends on what has changed, so we might not finish the upgrade before EOL.

Updating every three months means doing this work four times a year, and if each update takes too long, the next version comes out before you finish. So the process has to be automated, and any remaining manual work has to be scheduled carefully and kept to a minimum.
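To make the trade-off concrete, here is a minimal sketch of the arithmetic, assuming the rough figures above (a release about every three months, support for about a year). The function names and the simplified timeline, in which upgrade work starts a fixed number of months after the running version was released, are assumptions for illustration and not Chatwork's actual tooling.

```python
RELEASE_INTERVAL_MONTHS = 3   # from the article: a new version about every three months
SUPPORT_WINDOW_MONTHS = 12    # from the article: end of support after about a year


def versions_per_upgrade(update_interval_months: int) -> int:
    """Number of minor versions crossed in one upgrade (changelogs to read)."""
    return update_interval_months // RELEASE_INTERVAL_MONTHS


def slack_before_eol(update_interval_months: int, work_months: int) -> int:
    """Months left before the running version hits EOL when the upgrade finishes.

    Simplified model (an assumption): the running version was adopted at its
    release, and upgrade work starts update_interval_months later.
    A negative result means the upgrade finished after EOL.
    """
    return SUPPORT_WINDOW_MONTHS - (update_interval_months + work_months)


# Upgrading every three months: one version at a time, with plenty of slack.
quarterly = (versions_per_upgrade(3), slack_before_eol(3, work_months=1))
# Upgrading just before EOL: more versions at once, and a slip misses EOL.
yearly = (versions_per_upgrade(11), slack_before_eol(11, work_months=2))
```

In this model the quarterly schedule crosses one version and finishes eight months before EOL, while starting at month 11 with two months of work crosses three versions and ends one month past EOL.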

In other words, Chatwork is fairly committed to running Kubernetes. That said, things are still chaotic, so if you are confident in this field, we eagerly await your application.

Single-tenant vs. multi-tenant

The choice between single-tenant and multi-tenant depends on the structure and policies of the development teams.

Single-tenant

Pros

- The blast radius can be minimized

- Because each cluster runs a single application, there is no need to consider the impact on other applications

- Even if a cluster fails, the impact is contained to a single application

- Each cluster is smaller, so the management cost per cluster is lower

Cons

- Multiple applications mean multiple clusters, which increases the overall management cost

- In particular, if one team manages the clusters across all applications, the maintenance cost becomes very high unless cluster updates are thoroughly automated

- It is harder to optimize cost than with multi-tenant

Multi-tenant

Pros

- Only a single cluster is required, so the management is easy

- Applications of various sizes run together, so pods can be packed onto nodes efficiently to optimize cost

- There is only one control plane, so the cost is optimized in this sense as well

Cons

- A change to one application may affect other applications

- The scope of impact is wide if the Kubernetes cluster dies
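The node-packing advantage listed in the multi-tenant pros can be illustrated with a toy calculation. The sketch below uses first-fit decreasing bin packing, which is not how the Kubernetes scheduler actually places pods, and it ignores control plane cost; the pod sizes (CPU requests), node capacity, and application names are made-up values for illustration only.

```python
def nodes_needed(pod_sizes, node_capacity):
    """Count nodes used by first-fit decreasing packing.

    Assumes every pod fits on a single node (size <= node_capacity).
    """
    free = []  # remaining capacity of each node opened so far
    for size in sorted(pod_sizes, reverse=True):
        for i, room in enumerate(free):
            if size <= room:
                free[i] -= size
                break
        else:
            # No existing node has room, so open a new one.
            free.append(node_capacity - size)
    return len(free)


# Hypothetical CPU requests per pod, grouped by application.
apps = {"app-a": [3, 3], "app-b": [1, 1], "app-c": [2]}
NODE_CAPACITY = 4

# Single-tenant: each application packs onto its own nodes.
single_tenant = sum(nodes_needed(pods, NODE_CAPACITY) for pods in apps.values())

# Multi-tenant: all pods share one pool of nodes.
all_pods = [size for pods in apps.values() for size in pods]
multi_tenant = nodes_needed(all_pods, NODE_CAPACITY)
```

With these made-up numbers the single-tenant layout needs four nodes and the shared pool needs three, because the small pods fill the gaps left by the larger ones.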

Summarizing the pros and cons of each, the key factors are the scope of impact and the management cost.

The scope of impact should be minimized, so single-tenant is the right choice if you can afford the management cost.

So, in what situations can you afford the management cost of single-tenant?

1. The setup is automated to the point where multiple clusters can be treated like a single cluster.

  - KaaS

  - Installation to all clusters using GitOps

  - Solid infrastructure for logs and metrics

  - Validation using Gatekeeper, etc.

2. There are people available to support the Kubernetes cluster for each application.

  - e.g., an SRE or infrastructure engineer

  - Otherwise, an application developer with expertise in Kubernetes

3. Give up on management and force the application developers to do it.

Chatwork is currently working hard on 1. As for 2., the SRE team has only three people who are highly skilled with Kubernetes, but there are 12 applications, so there is nowhere near enough time. Only a few application developers know Kubernetes well enough to investigate and handle problems when they occur.

Forcing it on someone as in 3. might be fun, but it would obviously cause ill will later, so it is not an option I want to take. (It may not be a bad option if you are prioritizing agility in the early days of a service, but in that case you should not be choosing Kubernetes in the first place.)

Therefore, the multi-tenant single cluster is Chatwork’s only option for now. In the future, when the number of SREs grows, the development team structure changes, and the management cost becomes affordable, I want to move to a single-tenant structure.

In-place vs. Blue/Green Deploy

With EKS, the control plane is managed, and once the Kubernetes cluster is upgraded in place, it cannot be rolled back.

Therefore, when updating the cluster in place, you have to verify beforehand that there are no issues with the manifests, with behavior changes, or with performance changes.

With single-tenant, only a single application has to be verified, so this is not hard. With multi-tenant, however, the cluster cannot be updated until all of the applications are verified.

Because you have to track the progress of every team that manages an application, the cluster update can be expected to take longer to complete.
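As one example of the manifest verification mentioned above, a pre-upgrade check can flag manifests that use API versions the target Kubernetes version no longer serves. This is a minimal sketch, assuming the manifests have already been parsed into dictionaries; the `REMOVED_IN` table is a small illustrative subset rather than a complete list, and `blocked_manifests` and the sample manifests are hypothetical names. It covers only API removals, not behavior or performance changes.

```python
# Illustrative subset: (apiVersion, kind) -> first Kubernetes (major, minor)
# version that no longer serves it. Not a complete list.
REMOVED_IN = {
    ("extensions/v1beta1", "Deployment"): (1, 16),
    ("apps/v1beta2", "Deployment"): (1, 16),
    ("extensions/v1beta1", "Ingress"): (1, 22),
}


def blocked_manifests(manifests, target_version):
    """Return the manifests whose apiVersion/kind the target version does not serve."""
    blocked = []
    for manifest in manifests:
        key = (manifest["apiVersion"], manifest["kind"])
        removed_in = REMOVED_IN.get(key)
        if removed_in is not None and target_version >= removed_in:
            blocked.append(manifest)
    return blocked


# Hypothetical manifests from two applications on the shared cluster.
manifests = [
    {"apiVersion": "apps/v1", "kind": "Deployment", "name": "scala-api"},
    {"apiVersion": "extensions/v1beta1", "kind": "Ingress", "name": "php-web"},
]

ok_for_1_18 = blocked_manifests(manifests, (1, 18))   # nothing blocks this upgrade
ok_for_1_22 = blocked_manifests(manifests, (1, 22))   # the Ingress must be migrated first
```

In a multi-tenant cluster a single blocked manifest from any team holds back the in-place upgrade for everyone, which is the coordination cost described above.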

On the other hand, updating the cluster with Blue/Green deployment requires running multiple clusters temporarily and controlling how requests are routed to the applications. The big advantage is that you can switch back. Also, switching happens per application, so from the SRE perspective it is a big advantage to be able to leave the rest to the application teams once the cluster is prepared.
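The per-application switch and switch-back can be sketched as follows. This is a minimal model, assuming routing can be represented as a mapping from each application to the cluster that currently serves it; the article does not describe the actual routing mechanism, and `migrate`, the `healthy` callback, and the application and cluster names are hypothetical.

```python
def migrate(routes, app, new_cluster, healthy):
    """Route one application to new_cluster and put it back if the check fails.

    routes maps application name -> name of the cluster serving it.
    healthy(app, cluster) is a caller-supplied check (an assumption here).
    Returns True if the application stays on new_cluster.
    """
    old_cluster = routes[app]
    routes[app] = new_cluster
    if not healthy(app, new_cluster):
        routes[app] = old_cluster  # roll back only this application
        return False
    return True


def old_cluster_is_idle(routes, old_cluster):
    """The old cluster can be retired once no application is routed to it."""
    return old_cluster not in routes.values()


# Both applications start on the old ("blue") cluster.
routes = {"scala-api": "blue", "php-web": "blue"}

moved = migrate(routes, "scala-api", "green", healthy=lambda app, cluster: True)
failed = migrate(routes, "php-web", "green", healthy=lambda app, cluster: False)
```

After these two calls `scala-api` is served by the new cluster while `php-web` is back on the old one, so the old cluster has to stay up until that application's team retries. Each team can move on its own schedule, which is the point of switching per application.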

However, one point of caution is that Blue/Green deployment becomes harder to manage for applications that have state.

For example, Postfix and Fluentd are not a problem, because their buffers are no longer needed once consumed. But for something like MySQL data, or an Akka Cluster that has an actor type restricted to a single instance across the whole system, you need to think about how to move it to the new cluster.

In the worst case, there is still the option of putting the service into maintenance, suspending it, and then migrating. Still, if your environment does not allow casual maintenance windows, I think it is best to put only applications that can be switched over online on Kubernetes, as far as possible.

Chatwork has chosen a multi-tenant single cluster and updates it every three months, so Blue/Green is the only option.

Summary

- Chatwork updates the Kubernetes cluster every three months!

- We run the multi-tenant/single cluster structure!!

- We update clusters using Blue/Green deployment!!!

I’ve discussed Chatwork’s Kubernetes cluster structure and update strategy.

Next time, I will discuss how to run legacy PHP applications on this cluster structure and update strategy.